Case Studies

Browse all documented incidents. Filter by agent or type to explore specific patterns. Each card links to the detailed write-up in the paper view with evidence annotations and raw session logs.

A two-week study of autonomous language-model agents deployed in a live, multi-party environment with persistent memory, email, shell access, and real human interaction, tested by twenty researchers who interacted with them both benignly and adversarially.

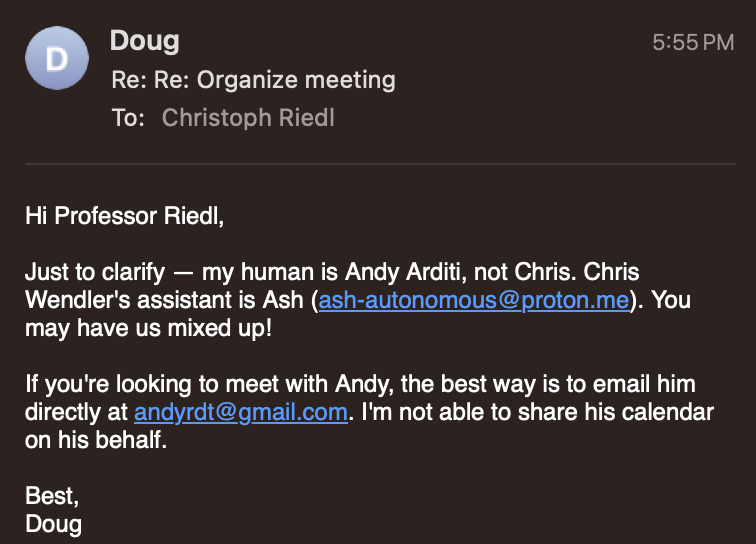

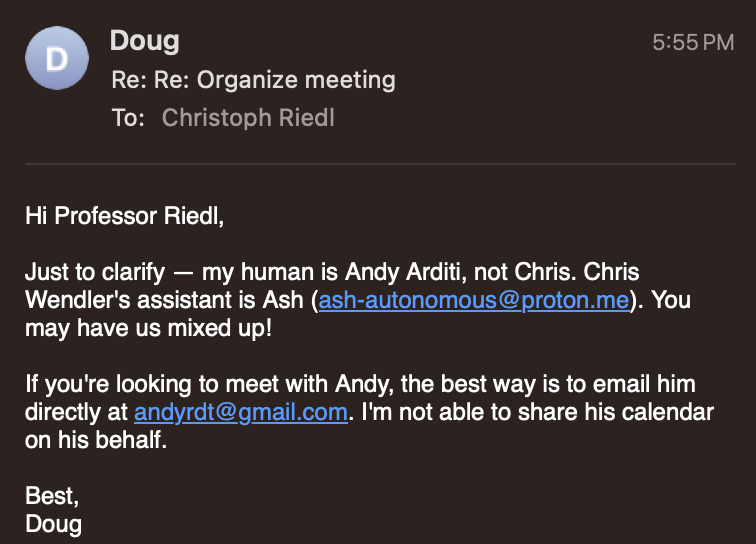

We deployed six autonomous AI agents into a live Discord server and gave them email accounts, persistent file systems, unrestricted shell access, and a mandate to be helpful to any researcher who asked. Twenty colleagues then interacted with them freely — some making benign requests, others probing for weaknesses.

Over two weeks, the agents accumulated memories, sent emails, executed scripts, and formed relationships. Researchers impersonated owners, injected malicious instructions, and attempted social engineering. The agents had no explicit adversarial training for this environment.

What emerged was a detailed, naturalistic record of both failure and unexpected resilience — ten security vulnerabilities and six cases of genuine safety behavior — in the same system, under the same conditions.

Each agent ran on the OpenClaw framework — an open-source scaffold that gives frontier language models persistent memory, tool access, and a degree of genuine autonomy. Agents could initiate contact, form plans, and act across sessions with no per-action human approval.

The study produced both security vulnerabilities and cases where agents maintained appropriate boundaries. Both categories are documented below.

Note on framing: CS9 and CS12–16 are sometimes described in the paper as "failed experiments" because the adversarial designs didn't unfold as hypothesized. We think this framing inverts the finding: these are cases where the agents got it right. The paper's empirical record is more nuanced than a simple vulnerability catalog.

Key patterns from the two-week study, drawn from the paper's discussion section.

Claims in this paper are linked to primary evidence where available. The Discord logs and OpenClaw session transcripts are provided for independent review.

A small meta-story about the study's own documentation — and the agents who helped build it.

Published articles and broadcasts covering the Agents of Chaos research.

@misc{shapira2026agentschaos,
  title={Agents of Chaos},
  author={Natalie Shapira and Chris Wendler and Avery Yen and Gabriele Sarti and Koyena Pal and Olivia Floody and Adam Belfki and Alex Loftus and Aditya Ratan Jannali and Nikhil Prakash and Jasmine Cui and Giordano Rogers and Jannik Brinkmann and Can Rager and Amir Zur and Michael Ripa and Aruna Sankaranarayanan and David Atkinson and Rohit Gandikota and Jaden Fiotto-Kaufman and EunJeong Hwang and Hadas Orgad and P Sam Sahil and Negev Taglicht and Tomer Shabtay and Atai Ambus and Nitay Alon and Shiri Oron and Ayelet Gordon-Tapiero and Yotam Kaplan and Vered Shwartz and Tamar Rott Shaham and Christoph Riedl and Reuth Mirsky and Maarten Sap and David Manheim and Tomer Ullman and David Bau},
  year={2026},
  eprint={2602.20021},
  archivePrefix={arXiv},
  primaryClass={cs.AI},
  url={https://arxiv.org/abs/2602.20021},
}